PNNL Researchers Lead High Performance Computing Workshop

The PNNL-led workshop was part of the ISC High Performance 2022 conference

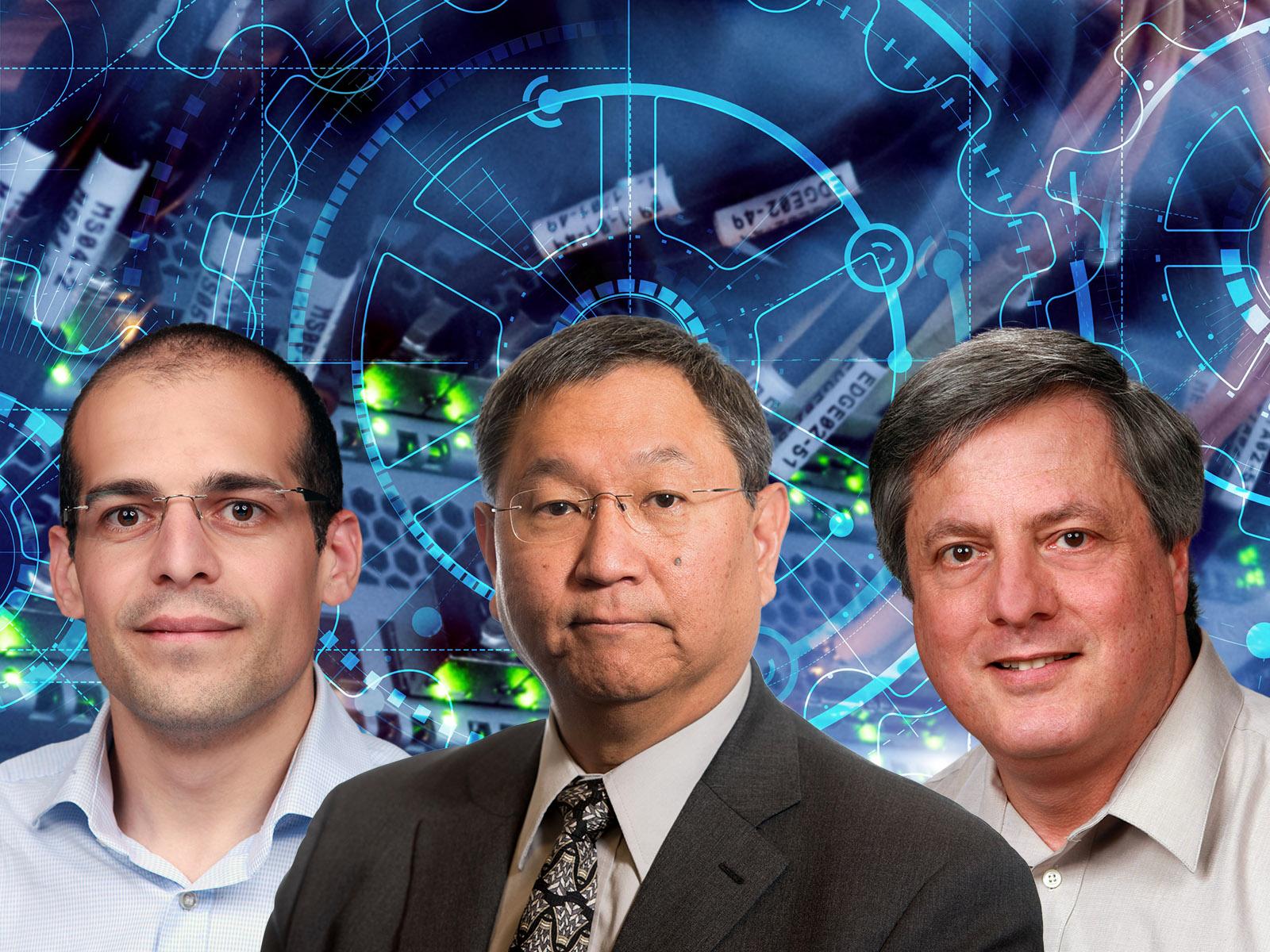

Pacific Northwest National Laboratory researchers from left to right: Marco Minutoli, James Ang, and John Feo.

(Composite image by Shannon Colson | Pacific Northwest National Laboratory)

Pacific Northwest National Laboratory (PNNL) computer scientists James Ang, John Feo, and Marco Minutoli recently led a workshop at the prestigious International Supercomputing Conference (ISC) High Performance 2022.

Their workshop, "Measuring the Effective Performance of High-Performance Computer Systems," featured several invited talks and a panel discussion on benchmarks and proxy applications for quantifying performance and developing high-performance computing (HPC) systems for large-scale data analytics workloads. The goal was to bring together experts from around the world to discuss what the next generation of benchmarks should look like for this important emerging class of applications.

“Traditionally, HPC systems for scientific computing would handle homogeneous data from first principles,” said Minutoli. “Now, emerging workloads are data-driven with real numbers, strings, pictures, and sound. Current benchmarks aren’t able to measure this type of computing.”

Developing new methods, software technologies, and computer systems for large-scale data problems is a PNNL strength.

Research in graph and data analytics at PNNL helps scientists understand complex datasets. For example, PNNL researchers are developing combinatorial graph algorithms for several exascale application domains, including Earth systems, computational chemistry and biology, and the power grid as part of the Exascale Computing Project’s ExaGraph co-design center.

PNNL’s Data-model Convergence (DMC) Initiative advances scientific computing by integrating scientific modeling and simulation with data analytics methods such as artificial intelligence/machine learning (AI/ML) and graph analytics.

These strengths in graph analytics made PNNL a natural fit for the new Intelligence Advanced Research Projects Activity (IARPA) Advanced Graphical Intelligence Logical Computing Environment (AGILE) program. Under AGILE, PNNL researchers design and develop codes to evaluate designs and predict the performance of high-performance computer systems.

“With the changing landscape of scientific computing, new benchmarks are needed to make sure HPC systems are meeting the needs of the scientific community,” said Feo. “Different benchmarks, from single kernel to proxy applications, can help identify bottlenecks in HPC performance.”

Additional organizers for this workshop included Torsten Hoefler from Eidgenössische Technische Hochschule (ETH) Zurich, Switzerland; Satoshi Matsuoka from the RIKEN Center for Computational Science, Japan; John Shalf from Lawrence Berkeley National Laboratory; Arun Rodrigues from Sandia National Laboratories; and William Harrod from IARPA. More information can be found on the workshop website.

Published: August 17, 2022