Thrust Areas

The RD2C initiative pursues two science thrusts. The thrusts are integrated and interdependent, and together they lead to new sensing and control designs that can be demonstrated in real-world applications for targeted critical infrastructure use cases.

Thrust 1: Cyber-Physical Systems Observational Science

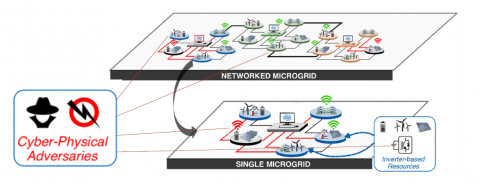

Thrust 1 acknowledges that multimodalities and complex interdependencies in cyber-physical systems give rise, under adverse conditions, to emergent and ensemble behaviors that are difficult to observe and characterize in real-world or demonstrated normal conditions. Understanding these systems at the levels of fidelity needed to model and represent them mathematically under adverse conditions makes it possible to generate datasets, measure resilience properties, and validate new algorithms and control approaches.

Thrust 1 topics:

- Building a Multi-level Fidelity Experimentation Platform

- Experiment Methodology, Process, and Execution

- Controls Integration and Validation

Thrust 2: Designing Resilience for Sensing and Control

Thrust 2 recognizes that current sensing and control solutions need to evolve to meet the resilience needs of future critical infrastructures (CIs). Current solutions are not context-aware, and they are neither robust nor adaptive enough to assure resilience under adverse conditions in the increasingly complex applications of future CIs. Leveraging the experiments, datasets, and validation capabilities of Thrust 1, the research efforts in Thrust 2 seek to develop novel approaches, theories, and algorithms that will lead to more resilient sensing and control for CIs.

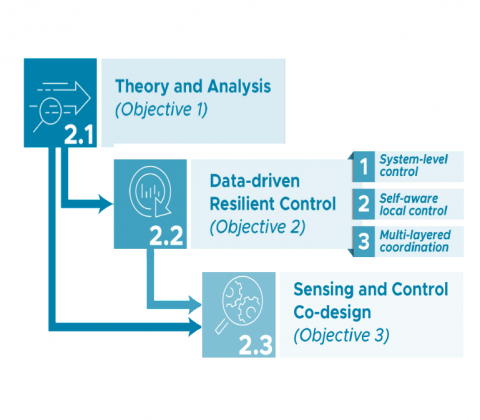

Thrust 2 topics:

- Control Theory and Analysis

- Data-driven Resilient Control

- Sensing and Control Co-design

Contacts

Thomas Edgar

Thrust 1 Leader

thomas.edgar@pnnl.gov

(509) 372-6195

Veronica Adetola

Thrust 2 Leader

veronica.adetola@pnnl.gov

(509) 371-6996