Teeguarden Part of a Report Offering Novel Paths to Assessing Health Risks

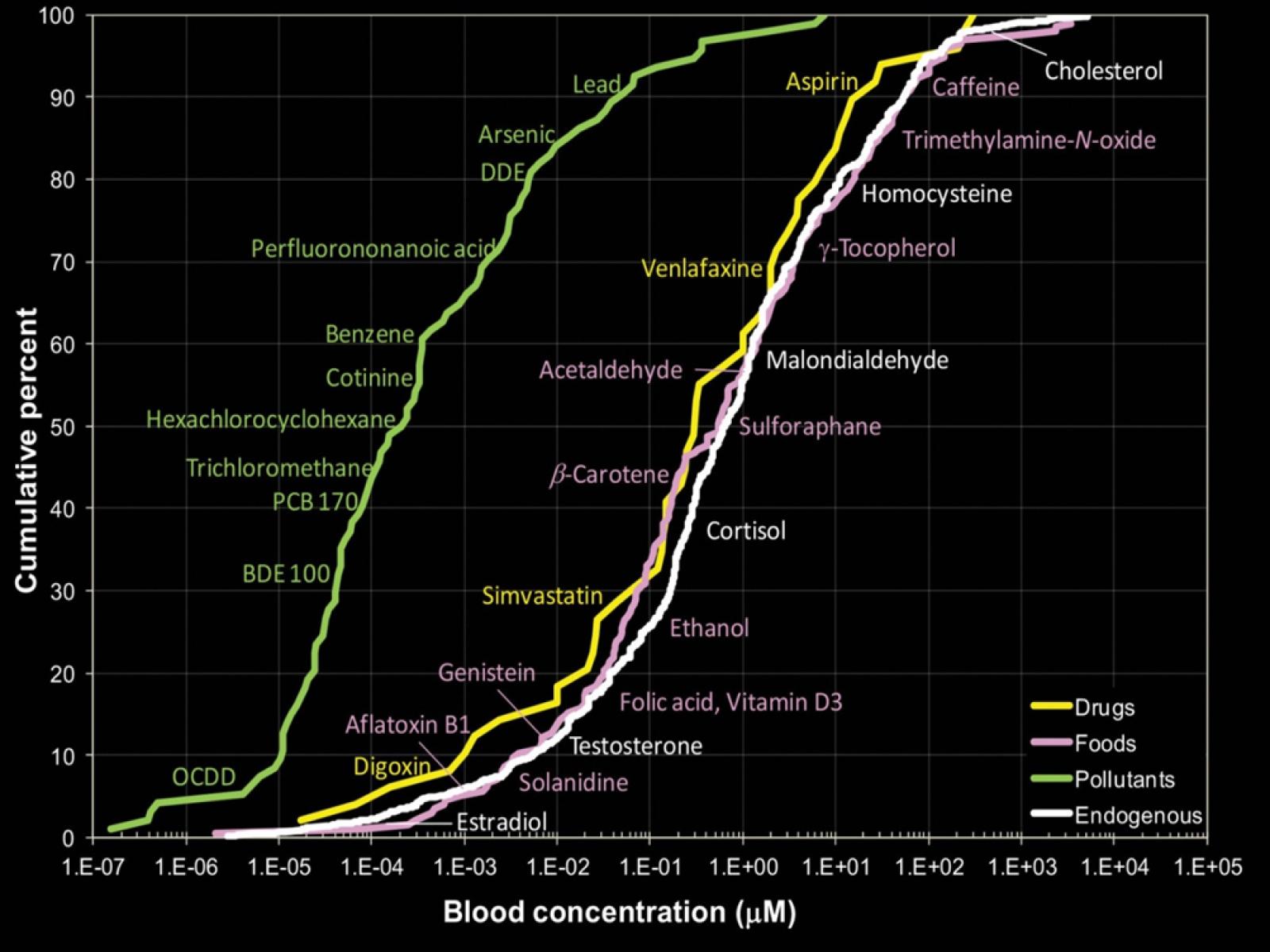

A survey of measured blood concentrations shows that, for the selected chemicals, concentrations of pharmaceuticals and naturally occurring (endogenous) chemicals are similar and are generally higher than concentrations of environmental contaminants. The findings highlight the importance of using highly sensitive analytical instrumentation to characterize human exposure.

Source: Rappaport et al. 2014.

When a house burns down, many factors could be at play: a fire in the fireplace, a wooden frame, a strong wind, and a missing alarm. No one factor, by itself, is enough to cause the catastrophe.

So it is with the origin of human disease: Many factors, not just one, result in an adverse outcome. This complexity, though not a new idea, was acknowledged anew by a recent report on better ways to assess public health risks, published Jan. 5 by the National Academies of Sciences, Engineering, and Medicine.

PNNL toxicologist Justin Teeguarden, an expert in human exposure assessments involving chemicals, was part of a committee of 18 scientists who contributed to the 216-page study, "Using 21st Century Science to Improve Risk-Related Evaluations." Three years in the making, the report comprises an introduction, five chapters, and detailed recommendations. Together they will inform how federal agencies integrate major advances in exposure science, toxicology, and epidemiology to better understand the many factors that contribute to disease.


Teeguarden was lead author of the report's chapter on exposure science. He also contributed to its case studies, a mainstay of the report, which he said were "intended to provide practical advice" for decision-makers at federal agencies such as the Food and Drug Administration and the Environmental Protection Agency.

The report used the house-fire analogy to illustrate what scientists call the sufficient-component-cause model, one way to describe the "multifactorial nature of disease." According to the report, this complexity, which includes the combined genetic, environmental, and life-stage factors that play a part in the onset of human disease, should now be addressed by bringing together emerging tools and methods. These tools give scientists insight into the 100,000 chemicals routinely used in commerce but largely untested for health effects. Making decisions about human exposures, said Teeguarden, means making "the best use of all the big data emerging now - on more chemicals, more biomarkers, and more biological effects."

These data come from new tools and methods. They include personal sensors that capture individual exposures; advanced computational techniques for estimating chemical exposures when no human exposure-measurement data exist; transgenic animals used to investigate specific exposure questions; and multi-omics analyses, which have transformed molecular epidemiology by probing the underlying biological factors that often complement empirical observations.

Some of these tools align with strengths on the PNNL campus, said Teeguarden. These include modeling and data analytics, analytical chemistry, toxicology, and the multi-omics analyses made possible by the instrumentation and experts at the Environmental Molecular Sciences Laboratory, a national science user facility.

Taking part in the National Academies report, he added, was "a lab investment" that reflects PNNL's long-term commitment to giving its experts the time and travel needed to weigh in on important scientific issues.

The resulting studies, data tables, and recommendations are almost never "highly flashy," said Teeguarden, but they shape how science policy is conceived and delivered. "These reports can transform fields and influence organizational strategy in the public and private sector."


Published: January 9, 2017