Optimizing Computer Communications

Researchers created a methodology to design customized network topologies

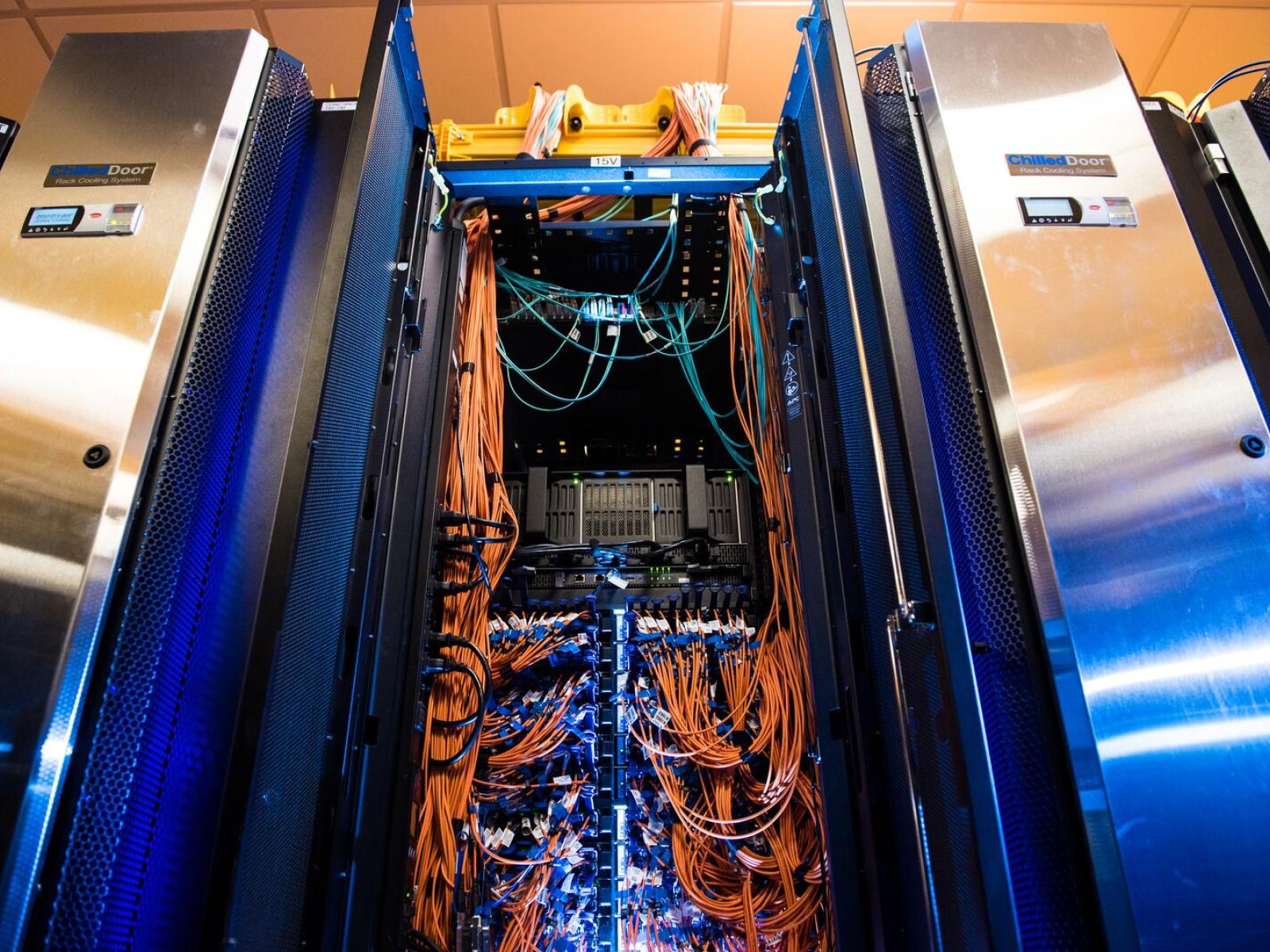

The hardware infrastructure (including the cabling seen here) that enables rapid communication between computers accounts for a significant fraction of the cost of modern high-performance computing resources.

(Photo by Andrea Starr | Pacific Northwest National Laboratory)

Computers have revolutionized the way science is done. For example, chemists can calculate the dynamics of molecules, and Earth scientists can make more accurate predictions of weather and climate events. As the computations become more complex, so too do the computer architectures needed to perform the calculations.

“Modern scientific workloads are so big, researchers need lots of computers to run them,” said Stephen Young, senior research mathematician at Pacific Northwest National Laboratory (PNNL). “The problem is, getting computers to communicate quickly is expensive.”

To address this, Young—along with PNNL computer scientists Joshua Suetterlein, Jesun Firoz, Joseph Manzano, and Kevin Barker—proposed a way to make computer communication more efficient. Their methodology received a best paper award at the IEEE High Performance Extreme Computing conference.

The PNNL team used the Structural Simulation Toolkit, developed at Sandia National Laboratories, to determine the optimal arrangement of computer nodes for different scientific workloads.

For some problems, the ideal topology (the pattern of compute nodes connected via network cables) is straightforward. However, for workloads involving artificial intelligence or graph analytics, any node could need information from any other node. The topology for these high-communication workloads has a particular impact on performance, as nodes without a direct connection must communicate through one or more “hops.” Each hop adds delay, so nodes spend more time waiting on communication, particularly if they are far apart in the network.
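To make the idea of hops concrete, here is a minimal Python sketch that counts hops with a breadth-first search. It is an illustration only, not the PNNL team's tooling: the eight-node ring, the added cross links, and the helper names (`hop_counts`, `average_hops`, `ring`, `ring_with_chords`) are all assumptions chosen for the example.

```python
from collections import deque


def hop_counts(adjacency, source):
    """Breadth-first search: number of hops from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def average_hops(adjacency):
    """Mean hop count over all ordered pairs of distinct nodes (connected graph assumed)."""
    n = len(adjacency)
    total = sum(sum(hop_counts(adjacency, s).values()) for s in adjacency)
    return total / (n * (n - 1))


def ring(n):
    """Hypothetical topology: each node is cabled to its two ring neighbors."""
    return {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}


def ring_with_chords(n):
    """Same ring, plus one extra cable from each node to the node opposite it."""
    adjacency = ring(n)
    for i in range(n):
        adjacency[i].add((i + n // 2) % n)
    return adjacency


if __name__ == "__main__":
    print("ring:", average_hops(ring(8)))
    print("ring with chords:", average_hops(ring_with_chords(8)))
```

In this toy setup, the extra cables shorten the average path between nodes, at the price of more hardware. That is the trade-off a topology designer has to weigh.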

“We wondered, if we had access to the communication structure of a workload, could we find out what the best topology for that workload could be?” said Manzano.

The researchers used a genetic-algorithm-based approach to design customized network topologies that improve the execution time for both physics-based and graph-based applications. The success of their methodology on both application types holds promise that it can improve the runtime of next-generation hybrid scientific workflows as well. These workflows would integrate physics simulations with a data analysis and machine learning phase to refine the simulation for scientific discovery goals.
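The article does not describe the details of the team's algorithm, so the following is only a generic, toy genetic algorithm for topology search, not the published method. Everything in it is an assumption made for illustration: the fixed link budget, the traffic matrix, the cost function (traffic-weighted hop count with a penalty for unreachable pairs), and the `crossover`, `mutate`, and `evolve` helpers.

```python
import random
from collections import deque
from itertools import combinations


def cost(links, n, traffic):
    """Traffic-weighted hop count; unreachable pairs are charged n hops (assumed penalty)."""
    adjacency = {i: set() for i in range(n)}
    for a, b in links:
        adjacency[a].add(b)
        adjacency[b].add(a)
    total = 0
    for src in range(n):
        dist = {src: 0}
        queue = deque([src])
        while queue:  # breadth-first search from src
            u = queue.popleft()
            for v in adjacency[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(traffic[src][dst] * dist.get(dst, n)
                     for dst in range(n) if dst != src)
    return total


def crossover(a, b, budget, rng):
    """Child keeps links shared by both parents, then fills up from the remaining parent links."""
    shared = a & b
    pool = sorted((a | b) - shared)
    rng.shuffle(pool)
    return frozenset(shared | set(pool[: budget - len(shared)]))


def mutate(links, all_links, rng):
    """Replace one randomly chosen link with a link not currently in the topology."""
    child = set(links)
    child.remove(rng.choice(sorted(child)))
    child.add(rng.choice(sorted(set(all_links) - child)))
    return frozenset(child)


def evolve(n, budget, traffic, generations=100, pop_size=20, seed=0):
    """Search for a low-cost topology that uses exactly `budget` links."""
    rng = random.Random(seed)
    all_links = list(combinations(range(n), 2))
    population = [frozenset(rng.sample(all_links, budget)) for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=lambda links: cost(links, n, traffic))
        survivors = population[: pop_size // 2]  # keep the better half unchanged
        children = []
        while len(survivors) + len(children) < pop_size:
            a, b = rng.sample(survivors, 2)
            children.append(mutate(crossover(a, b, budget, rng), all_links, rng))
        population = survivors + children
    return min(population, key=lambda links: cost(links, n, traffic))


if __name__ == "__main__":
    n = 6
    # Hypothetical workload: each node exchanges data only with its ring neighbors.
    traffic = [[0] * n for _ in range(n)]
    for i in range(n):
        traffic[i][(i + 1) % n] = traffic[(i + 1) % n][i] = 1
    best = evolve(n, budget=n, traffic=traffic)
    print(sorted(best), cost(best, n, traffic))
```

The input here is the workload's communication structure, expressed as a traffic matrix. This mirrors the question the researchers posed, although a real study would evaluate candidate topologies with a network simulator rather than a simple hop-count cost.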

“Though this work is still in the early stages, our results are promising for future systems,” said Young. “We could be looking at a future of adaptively tuning high-performance computing systems as we go.”

This research was supported by the Department of Energy, Office of Science, Advanced Scientific Computing Research program as part of the Center for Advanced Technology Evaluation.

Published: November 17, 2023