Machine Learning Engineering

Table of Contents

- What is machine learning engineering?

- History of machine learning engineering

- Why does machine learning engineering matter?

- Benefits of machine learning engineering

- Limitations of machine learning engineering

- Future applications of machine learning engineering

- Machine learning engineering at PNNL

What is machine learning engineering?

Machine learning engineering is a branch of engineering that implements ongoing data science developments to address complicated or intricate problems with large amounts of disparate data. This work bridges the gap between software engineers who build data platforms and data scientists who focus on developing algorithms and advancing machine learning models. Machine learning engineers often have specific domain knowledge to understand models and algorithms in addition to strong data and software engineering backgrounds, so they can deploy solutions to real world operations and retrain models incrementally over time. Through this, machine learning engineers enable computing systems to learn from data, identify patterns, and make decisions with reduced human monitoring.

Machine learning engineers build solutions that help users meet their goals by managing the inherent messiness of large amounts of data. Often this means automating tedious experimental, monitoring, or analytic activities to reduce the time experts spend making sense of the growing volume of data from ever-improving technology. However, putting a machine learning engineering platform into operation is a continuous process: machine learning engineers must respond when algorithms don’t perform as expected once the amount of data grows beyond experimental scale. This includes identifying and mitigating biases or misconceptions in algorithms as they are applied. Additionally, machine learning engineers often work to train computing systems to provide explanations for their reasoning, an aspect of trustworthy machine learning.

With the right applications, machine learning engineers can work with computers to separate useful data from noise, increase the predictive power and efficiency of computational models, and aid in decision making to address important national and global issues. In turn, this means more cost-effective and far-reaching outcomes that benefit disparate areas ranging from cybersecurity to human health.

History of machine learning engineering

Researchers, data scientists, and software engineers have been using machine learning engineering to coordinate their algorithms since the beginnings of machine learning in the 1950s. However, the expansive growth of machine learning engineering can be traced back to a few key events and trends in the 2000s. First, in 2006, Geoffrey Hinton and two of his colleagues published a paper that outlined a much faster method for training deep neural networks. The second major advancement was a significant speedup, around 2010, of graphics processing units (GPUs), the computer architectures that drive our modern video cards. Around the same time, a new computer competition was presented at the Institute of Electrical and Electronics Engineers (IEEE) Computer Vision and Pattern Recognition Conference in 2012. The competition, called ImageNet, challenged participants to submit algorithmic approaches that could sort a database of over a million images. From our vantage in 2022, this may seem simple or routine because most people are used to interacting with vision-based programs such as Pinterest or the facial recognition on Meta. However, at the time, this was a grand challenge that seemed insurmountable.

The breakthrough came when a researcher combined deep neural networks with the much faster GPUs for his entry to the ImageNet competition. Neural networks “learn” by layering hundreds or thousands of algorithms where the output of one algorithm becomes the input for the next one, making it possible to model the non-linear and complex relationships in the real world. However, these layered algorithms require vast amounts of computing power, which made them unworkable until GPUs could be used to process many pieces of data simultaneously. This new approach gained a significant advantage over others, and within a few years, every ImageNet submission used neural networks. By 2017, the majority of teams had a greater than 95 percent image recognition accuracy. The conference chose to sunset the ImageNet challenge, but the field of computer vision, and the approach to machine learning engineering, was transformed.
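The layering described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the ImageNet entry itself: the function names and the hand-picked weights are assumptions made for the example, and a real network would learn its weights from data and run on a GPU.

```python
def dense_layer(inputs, weights, biases):
    """Weighted sum of the inputs plus a bias, one output per row of weights."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Non-linear activation: negative values become zero."""
    return [max(0.0, v) for v in values]


def forward(inputs, layers):
    """Feed the output of each layer into the next one."""
    activations = inputs
    for weights, biases in layers:
        activations = relu(dense_layer(activations, weights, biases))
    return activations


# Hypothetical, hand-picked weights (not learned) for a tiny network with
# 2 inputs, 2 hidden units, and 1 output.
layers = [
    ([[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]),  # hidden layer
    ([[1.0, 1.0]], [0.0]),                     # output layer
]

print(forward([3.0, 1.0], layers))  # [2.0]
print(forward([1.0, 3.0], layers))  # [2.0]
```

With these weights the two stacked layers compute the absolute difference of the inputs, a non-linear relationship that no single weighted sum could express. Each row of weights is processed independently of the others, which is the kind of work a GPU can perform simultaneously.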

Why does machine learning engineering matter?

Machine learning engineering helps drive systems for fraud detection on credit cards and the reliability of a secure electric grid. It has been highly successful in health care, particularly in radiography and X-ray scanning. One International Business Machines Corporation (IBM) study exploring the detection efficiency of lymph node cancer cells found that the combined input from a sophisticated artificial intelligence (AI) system and pathologists dropped the AI’s error rate by 7.5 percent, while dropping the human pathologists’ 3.5 percent error rate to a mere 0.5 percent. Machine learning engineering is also found in Meta’s facial recognition, which cross-correlates trillions of images from a billion users to create meaningful predictions. Machine learning engineering informs recommendations on Netflix and on social media platforms like Instagram, Pinterest, and TikTok. Many people also encounter machine learning engineering applications in their daily life through voice recognition programs like Apple’s Siri or Amazon’s Alexa, where highly specialized algorithms and architecture work together to rapidly ingest, process, and analyze enormous amounts of data.

Machine learning engineering is useful any time a process requires an understanding of the entire pipeline of incoming data and how the data is interpreted, a systematic way to store results, and the ability to run models in parallel to synthesize information about complex problems. These applications are used to automate the process of interpreting data from scientific experiments, real-time sensors, and environmental monitoring. Purpose-built machine learning engineering applications are continually being developed for operational environments to advance from machine learning done on a single workstation in a single location to integrated machine learning algorithms used concurrently in multiple high-tempo, high-uptime distributed environments. There is a large potential for errors in these complicated environments, and machine learning engineering can help make the transition from proof of concept through development and testing to deployment for real-world users and systems.

Benefits of machine learning engineering

One of the largest benefits of machine learning engineering is its ability to automate routine processes. From scanning millions of shipping containers at a port of entry to monitoring fluctuations in electricity demand, well-implemented machine learning has the potential to improve quality of life by augmenting human intelligence.

For example, operators experience cognitive depletion when working long shifts. Doctors may lose focus when they must scan hundreds of images to locate areas of interest or concern. And new instruments create so much data that scientists can’t begin to interpret everything by hand even if they were to spend an entire lifetime doing so.

Many of these monitoring or pattern recognition processes are well suited to computers and machines, which do not fatigue as they perform simple tasks again and again. As computer scientist and technology entrepreneur Andrew Ng said, “If a human can do it in a couple of seconds, then an algorithm can do it.” As machine learning engineers build algorithms and pipelines to automate systems in real time, they can improve both the quality of gathered data and the types of jobs humans are able to perform, removing the need for mentally taxing tasks that involve constant repetition.

By delegating more menial tasks to machines, humans can shift to interpreting, diagnosing, and implementing information to fulfill objectives in research, decision making, connectivity, and health care, among many other fields. Computers will never take our jobs, but well-executed machine learning engineering can make humans more effective and increase their satisfaction at work.

Limitations of machine learning engineering

Although advances are being made, machine learning engineering will likely always fall short when problems require human creativity and dynamic thinking, particularly when making predictions. Machine learning relies on pattern recognition to create predictions for the future, but when conditions change quickly, the nuances of human intelligence excel. This is clear in the example of Zillow’s failed attempt to use its machine learning engineering tool Zestimate to create a home flipping business around the time of the COVID-19 pandemic in 2020. Assessing and predicting home values is difficult in the best of circumstances because price depends on the type and condition of the house in combination with difficult-to-describe variations in physical location and the timing of both the seller and the buyer. During the pandemic, changes in human movement combined with supply chain issues, evolving safety requirements, and an unpredictable market crippled the tool’s ability to accurately forecast which homes could be money makers. As a result, Zillow shut down its home flipping venture.

It is important to remember that machine learning engineering applications will only ever be as accurate and complete as the algorithms and data pipelines they rely on. Researchers must work with computers to obtain the appropriate biases to look for desired patterns without adding unintended conclusions that may skew results and outcomes. The same machine learning engineering systems that work on an experimental scale may act differently when they are exposed to larger amounts of data at a faster rate. Because of this, engineered machine learning systems require their designers’ continued involvement to understand and mitigate these unintended biases and guide the applications towards their original intent.
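One way designers stay involved is by monitoring whether operational data still resembles the data a model was built on. The sketch below is a deliberately simple check under stated assumptions: the function names, the sample values, and the threshold of two standard deviations are all hypothetical, and a production system would likely track many features with more robust statistics.

```python
from statistics import mean, stdev


def mean_shift(baseline, batch):
    """Return how many baseline standard deviations the batch mean has moved."""
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variation to compare against")
    return abs(mean(batch) - mean(baseline)) / spread


def needs_review(baseline, batch, threshold=2.0):
    """Flag a batch whose mean drifted past the (assumed) threshold."""
    return mean_shift(baseline, batch) > threshold


# Hypothetical sensor readings: the baseline comes from the experimental
# phase, the batches from later operation.
baseline = [10.0, 11.0, 9.0, 10.5, 9.5]
similar_batch = [10.2, 9.8, 10.1, 10.4]
shifted_batch = [14.0, 15.5, 13.8, 14.9]

print(needs_review(baseline, similar_batch))  # False
print(needs_review(baseline, shifted_batch))  # True
```

A flagged batch does not prove the model is wrong; it only signals that a person should check whether the model's assumptions still hold.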

Future applications of machine learning engineering

Excitingly, machine learning engineering is still in early stages. In the coming years, researchers expect a proliferation of machine learning engineering in fields outside tech and social media. This means that machine learning engineers could contribute to more efficient drug design and discovery, autonomous systems, smart homes, and higher capacity energy storage materials, among virtually any other field.

As machine learning innovates in an expanding number of disciplines, machine learning engineering will be needed to bring the efficiency of machine learning to production scale. In addition to new machine learning approaches developed in tandem with domain experts, the future of machine learning engineering could include both new ways of deploying data pipelines and increased processing speed. Advances in cloud engineering contribute to efforts to automatically deploy data at scale, and developments in heterogeneous compute power push results from a centralized device or location to disparate locations and various types of devices.

It is difficult to predict every area that machine learning engineering will touch, but people can expect to see direct machine learning engineering applications in their everyday lives in addition to the effects of machine learning engineering that trickle down from research and industry.

Machine learning engineering at PNNL

PNNL is leading the next generation of computing for scientific discovery.

PNNL’s work in machine learning engineering advances contemporary data analytics and artificial intelligence in both science and national security.

Our work in few-shot learning quickly builds machine learning models using small numbers of training examples in image, text, audio, and video datasets. This allows researchers, analysts, and decision makers to glean significantly more information from expensive, time-intensive, or high-risk situations or experiments.
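To give a feel for learning from only a handful of examples, the sketch below uses nearest-prototype classification, one common few-shot technique. It is not a description of PNNL's methods: the labels, feature vectors, and function names are hypothetical, and in practice the feature vectors would usually come from a pretrained model rather than being typed in by hand.

```python
import math


def centroid(points):
    """Average a list of equal-length feature vectors."""
    count = len(points)
    return [sum(p[i] for p in points) / count for i in range(len(points[0]))]


def build_prototypes(support_set):
    """Summarize each class by the centroid of its few labeled examples."""
    return {label: centroid(examples) for label, examples in support_set.items()}


def classify(query, prototypes):
    """Assign the query to the class whose prototype is closest."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Hypothetical support set: three labeled examples per class.
support_set = {
    "signal": [[0.9, 0.8], [1.0, 1.1], [1.1, 0.9]],
    "noise": [[-1.0, -0.9], [-0.8, -1.1], [-1.2, -1.0]],
}
prototypes = build_prototypes(support_set)

print(classify([0.7, 1.0], prototypes))    # signal
print(classify([-0.9, -0.7], prototypes))  # noise
```

Adding a new class requires only a few labeled examples and one more centroid, with no retraining of the rest of the model.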

Digital and physical system security benefit from our holistic machine learning engineering approach for research in unexpected system behavior. This includes unexpected behavior due to maliciously modified inputs, hardware systems used to train and deploy machine learning algorithms, and the downstream effects of bias in standardized training data or pre-trained models.

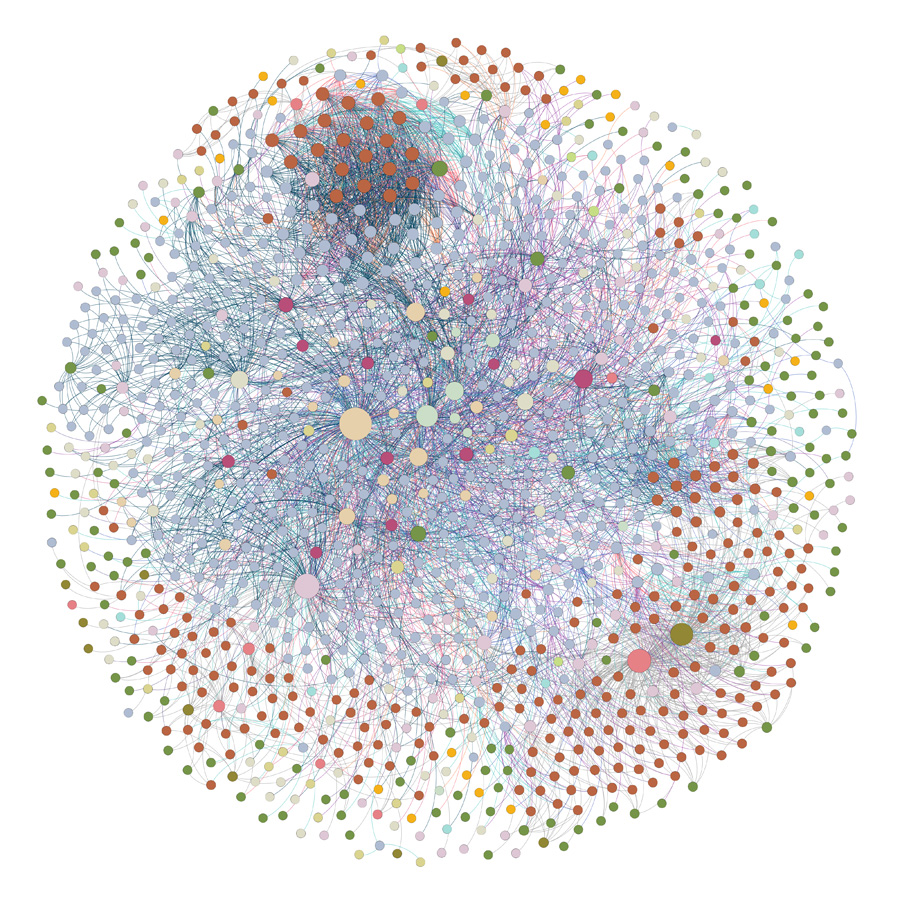

We use our research in computational topology to build novel mathematical methods in a range of applications, from sensor fusion and anomaly detection to pattern detection and visualization of complex data. For example, our HyperNetX open-source Python library analyzes and visualizes multiway relationships modeled as hypergraphs. These hypergraphs expose the interconnectedness of the data in areas such as cybersecurity, computational biology, geolocation, and pattern of life analysis without artificially generating two-way relationships.
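The difference between a multiway relationship and a set of two-way relationships can be shown with plain Python sets. This sketch does not use the HyperNetX API; the event and host names are hypothetical, and the helper functions exist only for this illustration.

```python
from itertools import combinations

# Hypothetical data: each hyperedge (an event) connects any number of nodes (hosts).
hyperedges = {
    "login_burst": {"host_a", "host_b", "host_c"},
    "file_transfer": {"host_b", "host_d"},
    "dns_anomaly": {"host_a", "host_c", "host_d", "host_e"},
}


def node_degrees(edges):
    """Count how many hyperedges each node belongs to."""
    degrees = {}
    for members in edges.values():
        for node in members:
            degrees[node] = degrees.get(node, 0) + 1
    return degrees


def shared_nodes(edges, first, second):
    """Return the nodes that two hyperedges have in common."""
    return edges[first] & edges[second]


def pairwise_expansion(edges):
    """Flatten every hyperedge into two-way links, the lossy alternative."""
    pairs = set()
    for members in edges.values():
        pairs.update(combinations(sorted(members), 2))
    return pairs


print(sorted(shared_nodes(hyperedges, "login_burst", "dns_anomaly")))  # ['host_a', 'host_c']
print(node_degrees(hyperedges)["host_e"])                             # 1
print(len(pairwise_expansion(hyperedges)))                            # 9
```

The three events become nine two-way links once flattened, and the links no longer record which hosts took part in the same event. Keeping the hyperedges intact preserves that grouping.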

PNNL data engineers perform mission-critical R&D that enables U.S. Customs and Border Protection (CBP) to analyze the vast amounts of data generated by sensors located in thousands of vehicle and cargo inspection systems at ports of entry around the country. The cloud-based data pipeline we are developing for analysis of non-intrusive inspection data from cargo at U.S. border crossings will enable CBP to leverage cutting-edge artificial intelligence and machine learning approaches to detect contraband, prevent smuggling, and secure the border.

PNNL has also been a trailblazer in the open-source data analytics space for the better part of the last decade, delivering advanced research and development solutions to sponsors across the national security landscape. These tools and capabilities advance research into understanding deception and misinformation campaigns in social and open-source media.