Advanced Computing, Mathematics and Data

Research Highlights

December 2012

Most Scalable Work Distribution Strategies

Load-balancing algorithms achieve the most scalable approaches to balance work

Efficiently utilizing all processor cores available is essential to fully utilize a supercomputer. Work-stealing and persistence-based load-balancing algorithms have been developed to allow large numbers of processors to quickly rebalance workload to keep all of them busy.

Results: Supercomputers consist of hundreds of thousands to millions of processor cores. Current users of such systems, like computational scientists, are required to balance their work among a large number of cores. Balancing work is an extremely challenging and time-consuming procedure for many applications. Researchers at Pacific Northwest National Laboratory and the University of Illinois have designed the most scalable algorithmic approaches to balance distribution of work amongst computer processor cores, persistence-based load balancing, and work-stealing.

Why It Matters: Effectively utilizing all processors directly impacts the rate at which science results can be produced. When using a supercomputer, a scientist will receive the fastest results when all processors are fully utilized, shortening the time to solution. Automating this process allows for reducing the effort required by an application scientist, such as a physicist or chemist, to use supercomputers effectively so they can spend more time focusing on their domain research results to solve the world's most intractable problems.

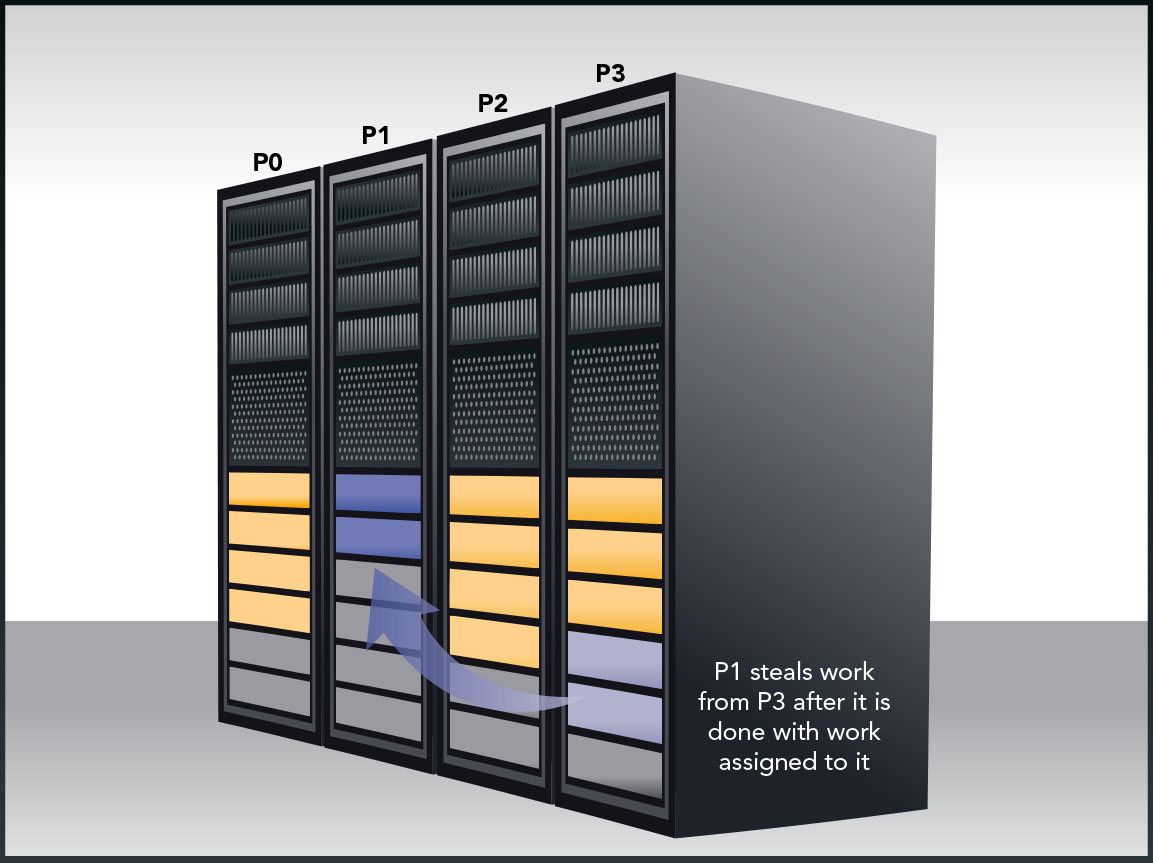

Methods: Supercomputing applications often evolve over time and require continuous updates and distribution of work assigned to each processor throughout the time that a job is in execution. The team found that persistence-based load balancers and work-stealing algorithms improve the efficiency of solving these problems by actively attempting to find and redistribute work. Like a road map, persistence-based load balancers continually check for the best route for processors to complete a given set of tasks, redistributing the work to be performed based on measured performance profiles from previous iterations. In work stealing, the calculations to be performed are automatically balanced across processors by having idle processors grab work units from other busy processors. Used in combination with, or as an alternative to, persistence-based load balancing, work-stealing is an attractive alternative for applications with severe imbalance of workloads that need to be corrected or cannot be easily profiled. Researchers demonstrated that for the coupled-cluster quantum chemistry application, this approach is an effective way to achieve reduced time-to-solution on several leadership-class computing systems, including the NERSC Hopper at Lawrence Berkeley (146,400 cores), Argonne's Intrepid (163,840 cores), and on Titan at Oak Ridge (128,000 cores).

What's Next: Computer architectures are constantly changing, and new computer science approaches will be needed to continue to use application codes at the highest levels of efficiency possible. Designing architecture-specific load-balancing methodologies remains an active area for computer science research.

Acknowledgments: This work was supported in part by the DOE Office of Science, Advanced Scientific Computing Research program, and by PNNL's Laboratory Directed Research and Development program through the eXtreme Scale Computing Initiative at Pacific Northwest National Laboratory. This research used resources of the Argonne Leadership Computing Facility at Argonne National Laboratory, the National Energy Research Scientific Computing Center, and the Oak Ridge Leadership Computing Facility at the Oak Ridge National Laboratory, which are supported by the Office of Science of the U.S. Department of Energy.

Research Team: Drs. Sriram Krishnamoorthy from PNNL, Jonathan Lifflander and Laxmikant Kale from University of Illinois Urbana-Champaign.

Reference: Lifflander J, S Krishnamoorthy, and L Kale. 2012. "Work Stealing and Persistence-based Load Balancers for Iterative Overdecomposed Applications." ACM Symposium on High-Performance Parallel and Distributed Computing 137-148. DOI:10.1145/2287076.2287103.